A research project funded by the Leverhulme Trust (2006 – September 2008)

Contributors: Rolf Haenni, Jan-Willem Romeijn, Gregory Wheeler, Jon Williamson

If we wish to reason more effectively, we can draw on both probability theory and deductive logic to offer guidance. However, these are very different formalisms: deductive logic tells us how the structure of sentences can be exploited to draw conclusions while probability theory tells us how uncertainties interact. For example, deductive logic tells us that from “Jack has bronchitis” and “if Jack has bronchitis then Jack has a cough” we can conclude “Jack has a cough”. Probability theory can be used to tell us the probability that “Jack has bronchitis given that he has a cough” if we know the relative incidences of this symptom and disease in the population.
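The probabilistic half of the Jack example can be sketched with Bayes' theorem. This is only an illustration: the incidence figures below are hypothetical, and the function name `posterior` is our own. The conditional "if Jack has bronchitis then Jack has a cough" is read here as P(cough | bronchitis) = 1.

```python
def posterior(prior: float, likelihood: float, evidence: float) -> float:
    """Bayes' theorem: P(H | E) = P(E | H) * P(H) / P(E)."""
    if not 0.0 < evidence <= 1.0:
        raise ValueError("evidence probability must lie in (0, 1]")
    return likelihood * prior / evidence


# Hypothetical population incidences (assumptions, not data from the project).
p_bronchitis = 0.05              # P(bronchitis)
p_cough = 0.20                   # P(cough)
p_cough_given_bronchitis = 1.0   # the deductive premise, read probabilistically

p_bronchitis_given_cough = posterior(
    prior=p_bronchitis,
    likelihood=p_cough_given_bronchitis,
    evidence=p_cough,
)
print(f"P(bronchitis | cough) = {p_bronchitis_given_cough:.2f}")
```

Under these assumed figures, about a quarter of those with a cough have bronchitis, even though everyone with bronchitis coughs.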

A probabilistic logic offers a richer formalism, one that combines the capacity of probability theory to handle uncertainty with the capacity of deductive logic to exploit structure. Potential applications are numerous and are to be found in disciplines as diverse as the philosophy of science (where we need to model theory choice and theory change, and to understand statistical methodology), artificial intelligence (where computers need to combine statistics with structural knowledge in order to forecast the weather, for instance), bioinformatics (where we need to combine deductive reasoning about chemical structure with statistical reasoning about observed biological characteristics) and legal argumentation (where we would like to model legal theory formation from case law). In each of these problem domains probabilistic information and structural knowledge need to be combined, and a probabilistic logic offers a framework for handling this combination.

The difficulty with probabilistic logics is that they tend to multiply the complexities of their probabilistic and logical components. Probabilistic logics can be hard to understand, and inference using probabilistic logics can be time-consuming and complex. In probability theory, probabilistic networks (including what are known as Bayesian nets and credal nets) have been developed to simplify the task of probabilistic reasoning. These nets can afford a simple representation of complex problems and can dramatically reduce the time it takes to perform calculations.
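To give a feel for what a Bayesian net represents, here is a minimal sketch of a three-variable chain, Smoker → Bronchitis → Cough. The variables, the numbers and the helper names (`joint`, `probability`, `conditional`) are all hypothetical and chosen only for illustration. The joint distribution is stored as three small conditional tables (five numbers) rather than one table over all eight worlds (seven free numbers).

```python
from itertools import product

# Hypothetical conditional probability tables for the chain
# Smoker -> Bronchitis -> Cough (illustrative numbers only).
P_SMOKER = 0.3
P_BRONCHITIS_GIVEN_SMOKER = {True: 0.25, False: 0.05}
P_COUGH_GIVEN_BRONCHITIS = {True: 0.9, False: 0.1}

VARIABLES = ("smoker", "bronchitis", "cough")


def bernoulli(p_true: float, value: bool) -> float:
    """Probability that a binary variable takes `value`, given P(True)."""
    return p_true if value else 1.0 - p_true


def joint(smoker: bool, bronchitis: bool, cough: bool) -> float:
    """Joint probability, factorised along the arrows of the net."""
    return (
        bernoulli(P_SMOKER, smoker)
        * bernoulli(P_BRONCHITIS_GIVEN_SMOKER[smoker], bronchitis)
        * bernoulli(P_COUGH_GIVEN_BRONCHITIS[bronchitis], cough)
    )


def probability(event: dict) -> float:
    """Sum the joint over every world consistent with `event`."""
    total = 0.0
    for values in product([True, False], repeat=len(VARIABLES)):
        world = dict(zip(VARIABLES, values))
        if all(world[name] == value for name, value in event.items()):
            total += joint(**world)
    return total


def conditional(target: dict, evidence: dict) -> float:
    """P(target | evidence) = P(target and evidence) / P(evidence)."""
    return probability({**target, **evidence}) / probability(evidence)


p_bronchitis_given_cough = conditional({"bronchitis": True}, {"cough": True})
print(f"P(bronchitis | cough) = {p_bronchitis_given_cough:.3f}")
```

This sketch answers queries by brute-force enumeration, so it shows only the compact representation. The speed-ups mentioned above come from inference algorithms that exploit the graph structure, which are not implemented here.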

The goal of this project is to investigate the application of probabilistic networks to probabilistic logic. If successful, probabilistic networks could render probabilistic logic simpler and more perspicuous and could render applications of probabilistic logic feasible at last.

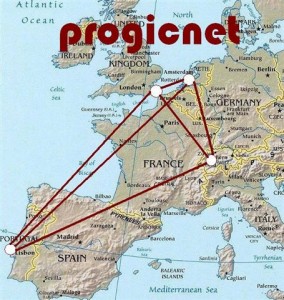

This project is an academic network, running from 2006 to 2008.

Writings

An executive summary of our programme:

Jon Williamson: A note on probabilistic logics and probabilistic networks, The Reasoner 2(5), 2008.

Our magnum opus:

Rolf Haenni, Jan-Willem Romeijn, Gregory Wheeler and Jon Williamson: Probabilistic logics and probabilistic networks, Synthese Library, Springer, to appear.

A collection on probabilistic logic and probabilistic networks:

Fabio Cozman, Rolf Haenni, Jan-Willem Romeijn, Federica Russo, Gregory Wheeler & Jon Williamson (eds): Combining probability and logic, Special Issue, Journal of Applied Logic 7(2), 2009; Editorial.

An application of our approach to psychology:

Jan-Willem Romeijn, Rolf Haenni, Gregory Wheeler and Jon Williamson: Logical Relations in a Statistical Problem, to appear in B. Lowe, E. Pacuit & J.W. Romeijn (eds), FotFS’07: Foundations of the Formal Sciences VI, Reasoning about Probabilities and Probabilistic Reasoning, London: College Publications 2008.

Other progicnet papers:

Rolf Haenni, Jan-Willem Romeijn, Gregory Wheeler and Jon Williamson: Possible Semantics for a Common Framework of Probabilistic Logics, in V. N. Huynh (ed.): Interval / Probabilistic Uncertainty and Non-Classical Logics, Advances in Soft Computing Series, Springer 2008, pp. 268-279.

Rolf Haenni: Probabilistic argumentation, Journal of Applied Logic, in press.

Rolf Haenni: Climbing the Hills of Compiled Credal Networks, in G. de Cooman, J. Vejnarová, and M. Zaffalon (editors), ISIPTA’07, 5th International Symposium on Imprecise Probabilities and Their Applications, pp. 213-222, 2007.

William Harper and Gregory Wheeler (eds): Probability and Inference: Essays in Honour of Henry E. Kyburg, College Publications, 2007.

Jan-Willem Romeijn: The All-Too-Flexible Abductive Method: ATOM’s Normative Status, Journal of Clinical Psychology, 2008, to appear.

Jan-Willem Romeijn and Igor Douven: The Discursive Dilemma as a Lottery Paradox, Economics and Philosophy, 23(3), pp. 301-319, 2007.

Jan-Willem Romeijn, D. Borsboom, and J.M. Wicherts: Measurement Invariance versus Selection Invariance: Is fair selection possible?, Psychological Methods, in press.

Jan-Willem Romeijn, I. Douven and L. Horsten: Anti-realist Truth, under submission.

Jan-Willem Romeijn and R. van de Schoot: A philosophical analysis of Bayesian model selection for inequality constrained models, in Null, Alternative and Informative Hypotheses, Hoijtink, Klugkist and Boelen (eds.), to appear.

Michael Wachter and Rolf Haenni: Logical compilation of Bayesian networks with Discrete Variables, in K. Mellouli (ed.), ECSQARU’07, 9th European Conference on Symbolic and Quantitative Approaches to Reasoning under Uncertainty, pp. 536-547, LNAI 4724, 2007.

Michael Wachter and Rolf Haenni: Propositional DAGs: a New Graph-Based Language for Representing Boolean Functions, In P. Doherty, J. Mylopoulos, and C. Welty (eds), KR’06, 10th International Conference on Principles of Knowledge Representation and Reasoning, pp. 277-285, 2006. AAAI Press.

M. Wachter and R. Haenni: Multi-State Directed Acyclic Graphs, In Z. Kobti and D. Wu (eds), CanAI’07, 20th Canadian Conference on Artificial Intelligence, pp. 464-475, LNAI 4509, 2007.

M. Wachter, R. Haenni and M. Pouly: Optimizing Inference in Bayesian Networks and Semiring Valuation Algebras, in A. Gelbukh and A. F. Kuri Morales (eds), MICAI’07: 6th Mexican International Conference on Artificial Intelligence, pp. 236-247, LNAI 4827, 2007.

Gregory Wheeler: Applied logic without psychologism, Studia Logica, 88(1): 137-156, 2008.

Gregory Wheeler: Two puzzles concerning measures of uncertainty and the positive Boolean connectives, in Proceedings of the 13th Portuguese Conference on Artificial Intelligence (EPIA 2007), Guimarães, LNAI Series, Berlin: Springer-Verlag, 2007.

Gregory Wheeler: Focused Correlation and Confirmation, British Journal for the Philosophy of Science, in press.

Gregory Wheeler & Luís Moniz Pereira: Methodological naturalism and epistemic internalism, Synthese, 163(3), pp. 315-328, 2008.

Gregory Wheeler & Jon Williamson: Evidential probability and objective Bayesian epistemology, in Prasanta S. Bandyopadhyay & Malcolm Forster (eds): Handbook of the philosophy of statistics, Elsevier 2009.

Jon Williamson: Objective Bayesian probabilistic logic, Journal of Algorithms in Cognition, Informatics and Logic 63: 167-183.

Jon Williamson: Aggregating judgements by merging evidence, Journal of Logic and Computation, in press.

Jon Williamson: Inductive influence, British Journal for the Philosophy of Science, 58, pp. 689-708, 2007.

Jon Williamson: Objective Bayesianism with predicate languages, Synthese 163(3), pp. 341-356, 2008.

Jon Williamson: Epistemic complexity from an objective Bayesian perspective, in A. Carsetti (ed.) ‘Causality, meaningful complexity and knowledge construction’, Springer, in press.


Activities

Workshop: Foundations of Formal Sciences: Reasoning about probabilities and probabilistic reasoning. May 2-5 2007, Amsterdam.

Talks

European Summer School in Logic, Language and Information, 4-15 August 2008, Hamburg.

Related Work

Rolf Haenni and Stephan Hartmann: Modeling Partially Reliable Information Sources: a General Approach based on Dempster-Shafer Theory, Information Fusion, 7(4): pp. 361-379, 2006.

Combining testimonial reports from independent and partially reliable information sources is an important epistemological problem of uncertain reasoning. Within the framework of Dempster-Shafer theory, we propose a general model of partially reliable sources, which includes several previously known results as special cases. The paper reproduces these results on the basis of a comprehensive model taxonomy. This gives a number of new insights and thereby contributes to a better understanding of this important application of reasoning with uncertain and incomplete information.

Henry E. Kyburg Jr., Choh Man Teng, and Gregory Wheeler: Conditionals and consequences. Journal of Applied Logic.

We examine the notion of conditionals and the role of conditionals in inductive logics and arguments. We identify three mistakes commonly made in the study of, or motivation for, non-classical logics. A nonmonotonic consequence relation based on evidential probability is formulated. With respect to this acceptance relation some rules of inference of System P are unsound, and we propose refinements that hold in our framework.

Gregory Wheeler: Rational acceptance and conjunctive / disjunctive absorption. Journal of Logic, Language and Information 15(1-2), pp. 49-63.

A bounded formula is a pair consisting of a propositional formula phi in the first coordinate and a real number within the unit interval in the second coordinate, interpreted to express the lower-bound probability of phi. Converting conjunctive/disjunctive combinations of bounded formulas to a single bounded formula consisting of the conjunction/disjunction of the propositions occurring in the collection along with a newly calculated lower probability is called absorption. This paper introduces two inference rules for effecting conjunctive and disjunctive absorption and compares the resulting logical system, called System Y, to axiom System P. Finally, we demonstrate how absorption resolves the lottery paradox and the paradox of the preface.

Jon Williamson: Bayesian networks for logical reasoning, in Carla Gomes & Toby Walsh (eds) [2001]: Proceedings of the AAAI Fall Symposium on using Uncertainty within Computation, AAAI Press Technical Report FS-01-04, pp. 136-143.

By identifying and pursuing analogies between causal and logical influence I show how the Bayesian network formalism can be applied to reasoning about logical deductions.

Jon Williamson: Probability logic, in Dov Gabbay, Ralph Johnson, Hans Jurgen Ohlbach & John Woods (eds) [2002]: Handbook of the Logic of Inference and Argument: The Turn Toward the Practical, Studies in Logic and Practical Reasoning Volume 1, Elsevier, pp. 397-424.

I examine the idea of incorporating probability into logic for a logic of practical reasoning. I introduce probability and its interpretations, give an account of the development of the logical approach to probability, its immediate problems, and improved formulations. Then I discuss inference in probabilistic logic, and propose the use of Bayesian networks for inference in both causal logics and proof planning.

Jon Williamson: Bayesian nets and causality: philosophical and computational foundations, Oxford University Press 2005.

Bayesian nets are widely used in artificial intelligence as a calculus for causal reasoning, enabling machines to make predictions, perform diagnoses, take decisions and even to discover causal relationships. This book, aimed at researchers and graduate students in computer science, mathematics and philosophy, brings together two important research topics: how to automate reasoning in artificial intelligence, and the nature of causality and probability in philosophy.

Links

Probabilistic Logic on Wikipedia

The Reasoner – a gazette on reasoning, inference and method.

Acknowledgements

We are very grateful to the Leverhulme Trust for providing financial support. We are also grateful to the Netherlands Organisation for Scientific Research (NWO) for supporting a related project “Probabilistic models of scientific reasoning”.